The Disclosure Dilemma: Responsible vs. Full Disclosure in Cybersecurity

- Dean Charlton

- 2 days ago

- 4 min read

The Architecture of a Secret

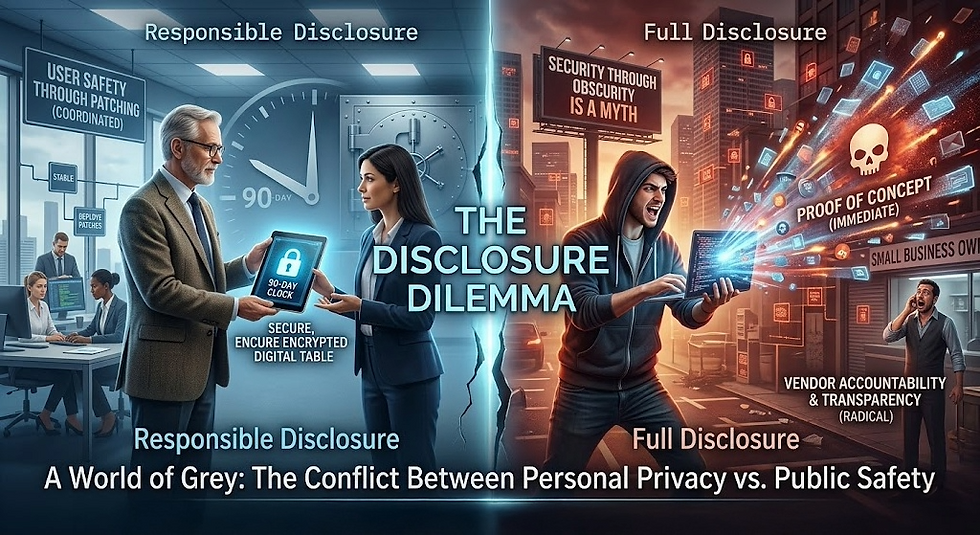

In the digital age, a single line of code can be the difference between a secure banking transaction and a catastrophic data breach. When a security researcher discovers a "zero-day" vulnerability, they hold a form of power that is both technical and deeply ethical. This article explores the two primary methodologies for handling such discoveries, providing an in-depth analysis of the philosophy, history, and impact of each approach.

The core of the debate lies in a fundamental question: who is the primary beneficiary of information? Is it the vendor, who needs time to repair their product, or is it the user, who has a right to know they are at risk?

This tension has existed since the dawn of the internet, but as our reliance on software grows, the consequences of this choice have escalated from minor inconveniences to matters of national security.

Responsible Disclosure – The Gentleman's Agreement

Responsible Disclosure, often rebranded as Coordinated Vulnerability Disclosure (CVD), is currently the industry standard adopted by major tech giants like Microsoft, Google, and Apple. It operates on the principle of partnership. The researcher discovers a bug, privately notifies the vendor, and provides a "grace period" (typically 90 days) for the vendor to develop, test, and release a patch before any information is made public.

The Philosophy of Cooperation

The primary argument for Responsible Disclosure is the prevention of harm. By keeping the vulnerability secret, the researcher ensures that malicious actors don't learn about the "door" until a "lock" is ready to be installed. This orderly process is designed to minimise the window of opportunity for attackers. It acknowledges that patching a modern operating system or a complex cloud infrastructure is an arduous task. A fix must be tested against thousands of configurations to ensure it doesn't cause more problems than it solves.

From the perspective of a Chief Information Security Officer (CISO), Responsible Disclosure is a godsend. It allows a security team to manage the rollout of a patch in a controlled environment, rather than reacting to a chaotic "hair on fire" event where every hacker in the world is trying to exploit their systems at once.

The Critics’ Corner: The Risk of Corporate Inertia

Critics of this model argue that "responsible" is a loaded term that favours corporations over users. When a bug is kept secret, the public is left in a state of "false security." They continue to use a product, unaware that their data is at risk. There is also the significant risk of "silent exploitation." If one researcher found the bug, critics contend, a state-sponsored hacking group or a criminal enterprise may well have found it too. In this scenario, the vendor is given a 90-day vacation while the users are being actively attacked in the shadows.

Full Disclosure – The Nuclear Option

Full Disclosure is the practice of publishing the details of a vulnerability, including "Proof of Concept" (PoC) code, immediately upon discovery. It is often described as the "nuclear option" of the cybersecurity world, born out of the frustrations of the 1990s when vendors would routinely ignore or sue researchers who tried to report bugs privately.

The Philosophy of Radical Transparency

Proponents of Full Disclosure believe that secrecy is the enemy of security. They argue that "security through obscurity" is a myth that only serves to protect a vendor's stock price. In their view, the public is already at risk, and the only way to level the playing field is to tell everyone at once. Full Disclosure acts as a forced catalyst. When a bug is public, a vendor can no longer ignore it. The pressure from the media, shareholders, and government regulators often produces "emergency patches" in days rather than months.

"Secrecy is the badge of fraud. If a vendor is not held accountable by public scrutiny, they have no incentive to build secure software from the start."

A common sentiment among Full Disclosure advocates.

The Critics’ Corner: Arming the Enemy

The backlash against Full Disclosure is intense. By publishing the details before a patch exists, the researcher is essentially providing a "how-to" guide for every cybercriminal on the planet. It creates a "zero-day" window where millions of systems are defenceless. Critics argue this is an irresponsible approach that ignores the reality of "downstream" users: small businesses and individuals who cannot patch their systems overnight. In this view, Full Disclosure is less like a warning and more like an arsonist claiming they are testing the fire department's response time.

The Evolution of the 90-Day Deadline

The 90-day window has become the modern compromise, popularised by Google's Project Zero. This approach attempts to marry the benefits of both worlds. It provides the vendor with a clear, reasonable timeframe for a fix (the "responsible" part) but maintains a hard deadline where the information will be released regardless of whether a patch is ready (the "disclosure" part).
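As a rough illustration of how a hard deadline works in practice, the sketch below computes the date on which details would go public. The function name `disclosure_date` and the rule "publish when the patch ships or when the grace period ends, whichever comes first" are assumptions made for this example; real programmes set their own terms, and this is not a description of any specific vendor's or team's policy.

```python
from datetime import date, timedelta
from typing import Optional

# Figure quoted in the article; real-world policies vary.
GRACE_PERIOD_DAYS = 90


def disclosure_date(reported_on: date,
                    patched_on: Optional[date] = None,
                    grace_days: int = GRACE_PERIOD_DAYS) -> date:
    """Return the date on which vulnerability details become public.

    Hypothetical rule for illustration only: publish when the patch
    ships, or when the grace period runs out, whichever comes first.
    """
    deadline = reported_on + timedelta(days=grace_days)
    if patched_on is not None and patched_on < deadline:
        # The vendor fixed the bug inside the window.
        return patched_on
    # No patch yet (or a late one): the hard deadline applies.
    return deadline


if __name__ == "__main__":
    report = date(2026, 1, 1)
    print(disclosure_date(report))                      # deadline applies
    print(disclosure_date(report, date(2026, 2, 15)))   # early patch
```

The point of the sketch is the second branch: the deadline does not move when the vendor is late, which is what turns a private report into public pressure.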

This "carrot and stick" approach has forced a culture shift in Silicon Valley. Vendors now know that they cannot "sit" on a bug indefinitely. However, this has also led to "patch-lite" scenarios where vendors release a quick, incomplete fix just to meet the 90-day deadline, only for researchers to find a "bypass" within hours of the patch's release.

Bug Bounties and the Monetisation of Disclosure

The rise of platforms like HackerOne and Bugcrowd has shifted the debate from ethics to economics. Companies now pay thousands of pounds for "responsible" reports. This has professionalised the industry, but it has also introduced "Non-Disclosure Agreements" (NDAs).

Some critics argue that bug bounties are simply a way for companies to "buy" the silence of researchers, preventing the broader community from learning from the architectural mistakes that led to the vulnerability in the first place.

Conclusion: A World of Grey

As we navigate the complexities of 2026, it is clear that neither extreme is perfect. If we rely solely on Responsible Disclosure, we risk a world of secret flaws and corporate laziness. If we embrace Full Disclosure, we risk a digital landscape of constant, unpatched chaos.

The future of cybersecurity depends on a nuanced middle ground, one where researchers are protected from legal threats, vendors are held to strict timelines, and the public is eventually informed of the risks they face.

In the end, disclosure is not just a technical process; it is a vital part of the democratic accountability of the digital age.
